Tuesday, February 10, 2026

Beyond Whiteboard Interviews: Why Real Coding Assessments Produce Better Hires

Picture this: a senior engineer with eight years of experience, a track record of shipping production systems at scale, and deep expertise in distributed architecture. She sits down for a technical assessment and blanks on implementing a red-black tree from memory. She has used red-black trees in production exactly zero times. She has, however, built a real-time event processing pipeline that handles two million messages per second. She does not get the job.

This scenario plays out across the industry every day. Whiteboard-style assessments have become the default technical hiring method not because they work, but because they became a habit. And that habit is costing engineering organizations their best candidates.

The Problem with Whiteboard-Style Coding Assessments

Whiteboard assessments test a very specific skill: writing syntactically plausible pseudocode from memory. That skill has little to do with the actual work of software engineering.

Real engineering involves an IDE with syntax highlighting, autocomplete, and inline documentation. It involves running code, reading error messages, and iterating. No professional engineer writes production code from memory on a blank surface.

The problems compound:

- False negatives dominate. Talented engineers who experience anxiety in artificial settings get filtered out. The format rewards candidates who have practiced whiteboard-specific techniques, not those who write excellent production code.

- Memorization over problem-solving. When candidates cannot run their code, assessments reward those who memorized algorithm implementations rather than those who can reason through problems and debug effectively.

- No standardization. Two reviewers evaluating the same answer will score it differently. Without a consistent rubric and execution environment, every assessment is a different test.

- Pseudocode reveals nothing about code quality. You cannot assess error handling, edge case coverage, or code organization from pseudocode.

What Candidates Actually Need: A Real Development Environment

If you want to know whether someone can write good code, give them a real coding environment and ask them to write good code.

A coding assessment should replicate the conditions under which engineers actually work. That means a proper editor, real execution, meaningful feedback through test cases, and support for the languages candidates actually use.

The Monaco Editor: A Professional IDE in the Browser

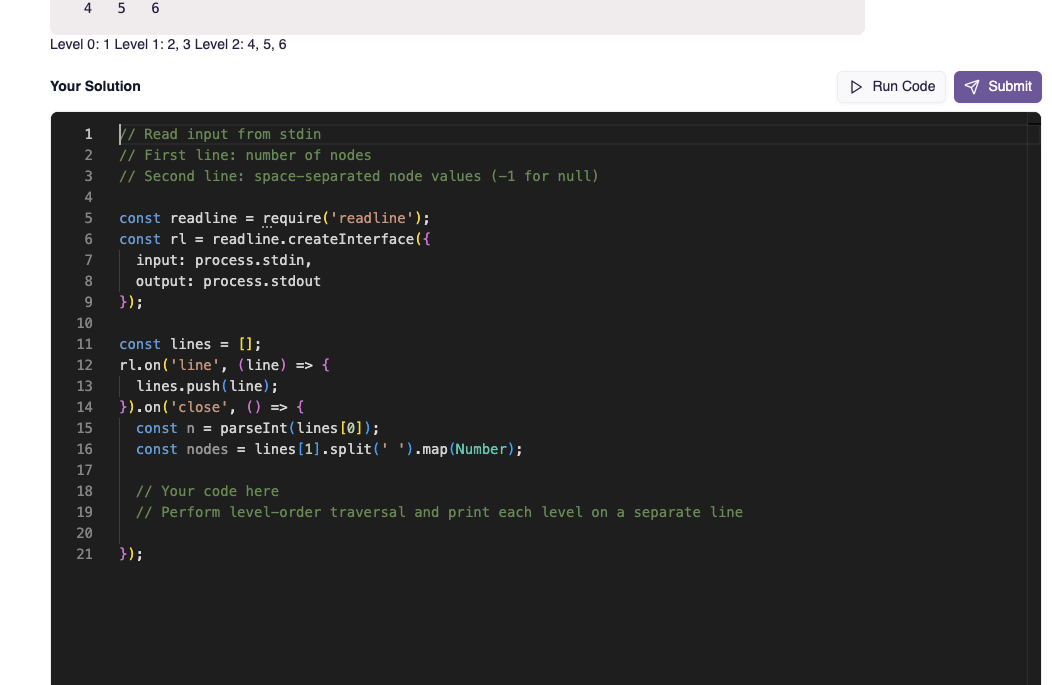

The coding environment at the center of nirn.ai's technical assessments is powered by the Monaco Editor, the same engine that drives Visual Studio Code. If your candidates use VS Code daily, they will feel immediately at home.

Monaco provides syntax highlighting, intelligent autocomplete, bracket matching, code folding, multi-cursor editing, and the keyboard shortcuts that experienced developers rely on instinctively. This is not a textarea with a monospace font. It is a professional-grade editor running in the browser, requiring no installation from the candidate.

Twelve-Plus Languages, One Consistent Experience

Candidates can write solutions in languages including Python, JavaScript, Java, C++, Go, Ruby, PHP, C#, TypeScript, Kotlin, Swift, and Rust. A backend role using Go should not force candidates to solve problems in JavaScript. A data engineering role should let candidates use Python. Matching the assessment language to the job produces a more accurate signal.

Real Execution with Secure Sandboxing

Every code submission runs in a secure sandbox powered by Judge0, an industry-standard code execution engine. Candidates can run their code against test cases, see output, read error messages, and iterate, exactly as they would during real development.

The execution environment includes configurable resource limits. CPU time can be configured up to 300 seconds and memory up to 512 MB. A string manipulation warm-up might get 5 seconds and 128 MB. An algorithm problem involving graph traversal might get 30 seconds and 256 MB. The constraints are part of the problem design.
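
To make the idea of limits as problem design concrete, here is a minimal sketch of how per-problem limits might be declared and validated against the ceilings above. The `ResourceLimits` class and its field names are hypothetical, not nirn.ai's actual configuration format. The mapping to `cpu_time_limit` (seconds) and `memory_limit` (kilobytes) assumes Judge0-style submission fields.

```python
from dataclasses import dataclass

# Platform-wide ceilings quoted in the text above.
MAX_CPU_SECONDS = 300
MAX_MEMORY_MB = 512


@dataclass(frozen=True)
class ResourceLimits:
    """Per-problem limits. Hypothetical class, for illustration only."""
    cpu_seconds: float
    memory_mb: int

    def __post_init__(self) -> None:
        # Reject limits outside the configurable range.
        if not 0 < self.cpu_seconds <= MAX_CPU_SECONDS:
            raise ValueError(f"cpu_seconds must be in (0, {MAX_CPU_SECONDS}]")
        if not 0 < self.memory_mb <= MAX_MEMORY_MB:
            raise ValueError(f"memory_mb must be in (0, {MAX_MEMORY_MB}]")

    def to_submission_fields(self) -> dict:
        # Assumed mapping onto Judge0-style fields:
        # cpu_time_limit in seconds, memory_limit in kilobytes.
        return {
            "cpu_time_limit": self.cpu_seconds,
            "memory_limit": self.memory_mb * 1024,
        }


# The two example problems from the paragraph above.
WARM_UP = ResourceLimits(cpu_seconds=5, memory_mb=128)
GRAPH_TRAVERSAL = ResourceLimits(cpu_seconds=30, memory_mb=256)
```

The dictionary returned by `to_submission_fields()` could then be merged into whatever request the platform sends to its sandbox. Validating at construction time means a misconfigured problem fails when it is authored, not when a candidate submits.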

Test Cases: Visible, Hidden, and Scored

The test case system is where a coding assessment moves from "can they write code" to "can they write correct, robust code."

- Visible test cases show the candidate the input, expected output, and actual output of their solution. These serve as a debugging tool, mirroring real development where engineers run tests, read failures, and fix issues.

- Hidden test cases verify edge cases, boundary conditions, and performance requirements without revealing the specifics. A candidate might pass all visible tests with a brute-force solution but fail hidden tests that require efficient handling of large inputs.
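
The difference between the two kinds of test case comes down to what the candidate gets to see. The sketch below illustrates one way that could work. The `Case` type, the `candidate_feedback` function, and the whitespace-tolerant comparison rule are all assumptions made for illustration, not a description of the platform's internals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    """One test case. Hypothetical structure, for illustration only."""
    name: str
    stdin: str
    expected: str
    hidden: bool = False


def outputs_match(expected: str, actual: str) -> bool:
    # Assumed comparison rule: ignore trailing whitespace on each line
    # and trailing blank lines at the end of the output.
    def normalize(text: str) -> list:
        return [line.rstrip() for line in text.rstrip().splitlines()]

    return normalize(expected) == normalize(actual)


def candidate_feedback(case: Case, actual: str) -> dict:
    """Build the report a candidate sees for one test case."""
    report = {"name": case.name, "passed": outputs_match(case.expected, actual)}
    if not case.hidden:
        # Visible cases expose the details as a debugging aid.
        report.update(input=case.stdin, expected=case.expected, actual=actual)
    # Hidden cases reveal only the pass/fail result.
    return report
```

A visible case returns the input, expected output, and actual output alongside the result. A hidden case returns only its name and whether it passed, so edge cases stay undisclosed.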

Each test case carries an individual score, so partial credit is built in. A candidate who passes seven out of ten test cases gets credit for what they accomplished rather than a binary pass or fail. Two candidates who both "partially solved" a problem might score 70% and 40%, a distinction binary grading would erase entirely.
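
The arithmetic behind partial credit is simple enough to sketch. The function below is a hypothetical illustration that assumes each test case carries a point value and that the final score is the share of available points earned.

```python
def score_submission(results: list[tuple[float, bool]]) -> float:
    """Return the percentage of available points earned.

    Each entry is (points, passed) for one test case.
    """
    total = sum(points for points, _ in results)
    if total <= 0:
        raise ValueError("test cases must carry a positive total score")
    earned = sum(points for points, passed in results if passed)
    return 100 * earned / total


# Ten equally weighted test cases, as in the example above.
candidate_a = [(10, True)] * 7 + [(10, False)] * 3
candidate_b = [(10, True)] * 4 + [(10, False)] * 6

print(score_submission(candidate_a))  # 70.0
print(score_submission(candidate_b))  # 40.0
```

Because each case carries its own points, a problem author can also weight a hard performance test more heavily than a basic correctness check.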

Auto-scoring eliminates reviewer subjectivity for the objective portion. The code either passes the test case or it does not.

One Assessment, Multiple Dimensions

Coding ability is necessary but not sufficient. nirn.ai's assessment platform combines multiple question types into a single evaluation:

- Multiple-choice questions screen for foundational knowledge: language semantics, data structures, algorithmic complexity.

- Coding questions assess practical implementation skills in a real editor with real execution.

- Video response questions evaluate communication ability and the capacity to explain technical concepts clearly.

- Open-ended questions test architectural reasoning and written communication.

One candidate session covers multiple evaluation dimensions. Engineering managers get a complete picture without scheduling four separate interview rounds.

Proctoring Built In

Every assessment on nirn.ai includes integrated proctoring. Screen recording captures the entire coding session, so reviewers can watch how a candidate approached the problem, not just the final submission. Browser monitoring tracks copy-paste events, tab switches, and other activities that may indicate external assistance. All of this works without separate setup or additional cost.
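
As a rough illustration of how browser monitoring data might be condensed for a reviewer, the sketch below counts flagged events in a session log. The event names and the log shape are hypothetical. This article does not describe the platform's actual event schema.

```python
from collections import Counter

# Hypothetical event names, chosen for illustration only.
FLAGGED_EVENTS = {"paste", "tab_switch"}


def summarize_events(events: list[dict]) -> dict:
    """Count the flagged events in a session's event log."""
    counts = Counter(
        event["type"] for event in events if event["type"] in FLAGGED_EVENTS
    )
    return dict(counts)
```

A summary like this is a prompt for a human to review the recording, not a verdict on its own. A tab switch may simply mean the candidate checked the problem statement.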

Better Candidate Experience, Better Signal

A coding assessment with a real IDE is not just better for employers. It is better for candidates. No software installation. A familiar editor with the features they depend on. Clear instructions and visible test cases that help them understand what is expected. A standardized environment that does not change depending on which reviewer they happen to get.

When candidates perform in conditions that reflect actual work, you learn what they can actually do. When candidates perform in artificial conditions, you learn how well they prepared for artificial conditions.

Better Assessments Produce Better Hires

Every false negative in your hiring pipeline is a strong engineer who went to a competitor. Every false positive is a costly mis-hire. Whiteboard-style assessments, with their artificial constraints and subjective evaluation, inflate both error types.

A coding assessment platform built around a real development environment, objective test case scoring, and multi-dimensional evaluation reduces these errors systematically. Candidates show you what they can build. Scoring reflects what they actually produced. The decision is based on evidence, not impressions.

If your team is still relying on whiteboard-style assessments, consider what you are optimizing for. If the answer is "hiring engineers who write excellent production code," give them a real coding environment and find out.