Wednesday, February 25, 2026

From Job Description to Technical Assessment in Minutes: The AI-Powered Hiring Advantage

It is 9 AM on a Monday. Sarah, a senior recruiter at a mid-sized SaaS company, has three open engineering roles to fill this quarter. Before she can screen a single candidate, she needs to build three separate technical assessments, each tailored to a different role, skill set, and seniority level. She opens a blank document, pulls up the job descriptions, and starts the tedious work of sourcing questions, calibrating difficulty, and writing scoring rubrics. By the end of the day, she has completed exactly one assessment. The other two will have to wait.

This scenario plays out thousands of times every week. For teams hiring five, ten, or fifty people a month, the manual approach to assessment creation is a bottleneck that slows down the entire pipeline. Time-to-hire stretches, top candidates accept offers elsewhere, and recruiters burn out on work that feels more like data entry than talent strategy.

There is a better way. AI-powered assessment creation transforms the process from a day-long effort into a focused, fifteen-minute workflow. Instead of starting from a blank page, you start from the job description you have already written, and the AI does the heavy lifting from there.

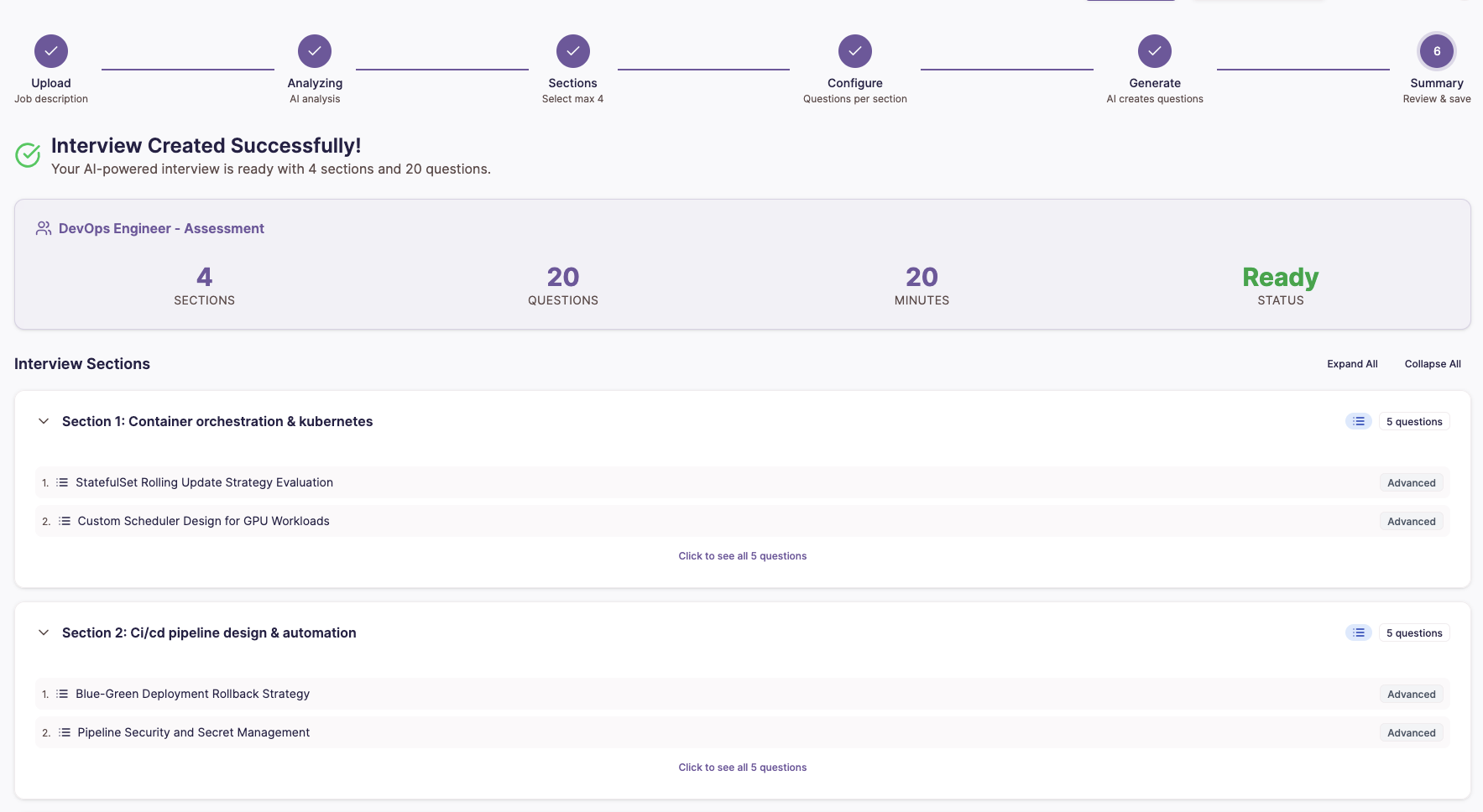

How AI Assessment Creation Actually Works

Let us walk through the real workflow step by step using a concrete example: hiring a Senior Full-Stack Developer with experience in React, Node.js, PostgreSQL, and cloud infrastructure.

Step 1: Upload or Paste the Job Description

You start with what you already have. Paste the job description directly into the platform, or upload it as a file. No special formatting required. The AI works with the same JD you posted on your careers page.

Step 2: AI Analyzes Skills and Competencies

Within seconds, the AI parses the job description and identifies technical skills, seniority signals, and competency areas. For our Senior Full-Stack Developer role, it might extract:

- Frontend: React, TypeScript, state management, responsive design

- Backend: Node.js, REST API design, authentication patterns

- Database: PostgreSQL, query optimization, schema design

- Infrastructure: Cloud deployment, CI/CD, containerization

- Soft skills: System design thinking, technical communication

This is not keyword matching. The AI understands that a "senior" role implies system design capability, that "ownership of features end-to-end" signals full-stack depth, and that "collaborate with cross-functional teams" means communication skills matter.

Step 3: AI Suggests Assessment Sections

Based on the extracted competencies, the AI proposes a structured assessment with distinct, timed sections:

- Section 1: React and Frontend Fundamentals (20 minutes)

- Section 2: Backend API Design with Node.js (25 minutes)

- Section 3: Database and SQL Proficiency (15 minutes)

- Section 4: System Design Challenge (30 minutes)

- Section 5: Communication and Problem-Solving (10 minutes)

Each section comes with a recommended time allocation and a suggested pass/fail threshold, both adjustable.
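
To make the proposal concrete, here is a minimal sketch of how that section plan could be represented as data. The titles and durations come from the example above; the `Section` class, the `pass_threshold` field, and its 0.6 default are hypothetical names and values chosen for illustration, not the platform's actual schema.

```python
from dataclasses import dataclass


@dataclass
class Section:
    title: str
    minutes: int
    pass_threshold: float  # assumed: fraction of points needed to pass


def build_example_assessment() -> list[Section]:
    """Return the five-section plan from the Senior Full-Stack example."""
    plan = [
        ("React and Frontend Fundamentals", 20),
        ("Backend API Design with Node.js", 25),
        ("Database and SQL Proficiency", 15),
        ("System Design Challenge", 30),
        ("Communication and Problem-Solving", 10),
    ]
    # 0.6 is a placeholder default; the real suggested thresholds are not stated.
    return [Section(title, minutes, pass_threshold=0.6) for title, minutes in plan]


def total_minutes(sections: list[Section]) -> int:
    """Total candidate time across all sections."""
    return sum(s.minutes for s in sections)


sections = build_example_assessment()
sections[3].pass_threshold = 0.7  # a reviewer raising the bar for system design
print(total_minutes(sections))  # 100
```

Summing the durations shows that this example plan asks for 100 minutes of candidate time, a useful number to check before you publish.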

Step 4: AI Generates Calibrated Questions

This is where the real time savings happen. The AI generates questions for each section, calibrated to the difficulty level appropriate for the role's seniority. It draws on models from multiple providers, including OpenAI, Anthropic (Claude), and Google (Gemini), selecting the best-fit model for each question type and falling back automatically to another provider if one is unavailable.
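
The fallback behavior can be pictured with a short sketch. This is only an illustration of the general pattern, not the platform's implementation: `generate_with_fallback`, `ProviderUnavailable`, and the two stand-in provider functions are hypothetical, and no real provider API is called.

```python
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Raised by a provider callable when it cannot serve the request."""


Provider = Callable[[str], str]


def generate_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each provider in preference order; return (provider_name, text)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            # Remember the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only.
def flaky_provider(prompt: str) -> str:
    raise ProviderUnavailable("simulated outage")


def stub_provider(prompt: str) -> str:
    return f"[draft question for: {prompt}]"


name, text = generate_with_fallback(
    "React hooks, senior level, multiple choice",
    [("primary", flaky_provider), ("secondary", stub_provider)],
)
print(name)  # secondary
```

Ordering the provider list per question type is one simple way to express "best-fit model first", with the rest of the list acting as the fallback chain.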

Questions are generated with Bloom's Taxonomy in mind, so the assessment tests not just recall but also application, analysis, and synthesis. For the React section, you might see multiple-choice questions on hook behavior, a coding challenge that asks the candidate to refactor a component, and a conceptual question about rendering performance.
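
One way to sanity-check that mix during review is to tag each question with a Bloom level and measure how much of the section goes beyond recall. The sketch below uses the six levels of the original taxonomy; the `higher_order_share` helper and the level tags assigned to the three React questions are assumptions made for illustration.

```python
# Levels of the original Bloom's Taxonomy, lowest to highest.
BLOOM_LEVELS = (
    "knowledge",
    "comprehension",
    "application",
    "analysis",
    "synthesis",
    "evaluation",
)


def higher_order_share(question_levels: list[str]) -> float:
    """Fraction of questions tagged at 'application' or above."""
    for level in question_levels:
        if level not in BLOOM_LEVELS:
            raise ValueError(f"unknown Bloom level: {level}")
    if not question_levels:
        return 0.0
    cutoff = BLOOM_LEVELS.index("application")
    higher = sum(1 for lvl in question_levels if BLOOM_LEVELS.index(lvl) >= cutoff)
    return higher / len(question_levels)


# Assumed tags: hook-behavior MCQ, refactoring challenge, rendering-performance question.
react_section = ["knowledge", "application", "analysis"]
print(round(higher_order_share(react_section), 2))  # 0.67
```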

Step 5: Human Review, Edit, and Approve

No AI-generated question ever reaches a candidate without a human approving it. You review each question, edit the wording, adjust difficulty, swap out questions you do not like, or add your own. The AI gives you a strong starting point. You provide the judgment and final quality check.

The entire process, from pasting the job description to publishing the assessment, typically takes ten to fifteen minutes.

Five Question Types for Complete Candidate Evaluation

A technical assessment that only tests one dimension is an incomplete assessment. The platform supports five distinct question types.

Multiple Choice (MCQ)

Auto-scored questions that test foundational knowledge and conceptual understanding. Effective for quickly filtering candidates on must-have knowledge. Results are available the moment a candidate submits.

Coding Challenges

Candidates write and run real code in an in-browser editor powered by the Monaco Editor, the same engine behind Visual Studio Code. The platform supports more than twelve programming languages, including Python, JavaScript, TypeScript, Java, C++, Go, Ruby, and Rust. Code is executed against predefined test cases, with configurable time and memory limits. This is a working development environment where candidates solve real problems at their own pace.
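
To show what "executed against predefined test cases" means in practice, here is a minimal sketch of a test-case runner with a time limit. It is not how the platform runs code: `run_test_cases` and its verdict strings are hypothetical, it handles Python submissions only, it does not sandbox anything, and it leaves out the memory limit. A production system would run submissions in an isolated environment.

```python
import subprocess
import sys


def run_test_cases(
    source: str, cases: list[tuple[str, str]], time_limit_s: float = 5.0
) -> list[str]:
    """Run Python `source` once per (stdin, expected_stdout) case; return one verdict per case."""
    verdicts = []
    for stdin_text, expected in cases:
        try:
            proc = subprocess.run(
                [sys.executable, "-c", source],
                input=stdin_text,
                capture_output=True,
                text=True,
                timeout=time_limit_s,  # the child process is killed when this expires
            )
        except subprocess.TimeoutExpired:
            verdicts.append("time_limit_exceeded")
            continue
        if proc.returncode != 0:
            verdicts.append("runtime_error")
        elif proc.stdout.strip() == expected.strip():
            verdicts.append("passed")
        else:
            verdicts.append("wrong_answer")
    return verdicts


# A candidate submission that sums the integers on one input line.
candidate_code = "print(sum(int(x) for x in input().split()))"
cases = [("1 2 3", "6"), ("10 -4", "6"), ("7", "7")]
print(run_test_cases(candidate_code, cases))  # ['passed', 'passed', 'passed']
```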

Video Responses

Candidates record themselves answering behavioral, communication, or technical explanation prompts. You can configure the recording duration and the number of retakes allowed. This format is invaluable for roles where communication and presentation matter.

Open-Ended Questions

Free-text responses for conceptual, analytical, or opinion-based questions. These reveal how candidates think and communicate in writing.

System Design

Architecture and design thinking questions that test scalability reasoning, trade-off analysis, and real-world engineering judgment. Essential for senior and lead roles.

By combining these five question types within a single assessment, you evaluate candidates across the full spectrum of technical ability.

Can AI Really Create Good Questions?

This is the right question to ask, and the honest answer is nuanced. AI-generated questions are not perfect out of the box. They are, however, strong starting points that sharply reduce the effort required to build a high-quality assessment.

Three mechanisms keep quality high.

First, the human-in-the-loop model means every question is reviewed before it reaches any candidate. The AI proposes. The human edits or approves. This is not a fully automated pipeline.

Second, calibration analytics provide feedback after candidates complete assessments. If a section's average score is 95%, the questions are probably too easy. If every candidate fails a particular question, it may be poorly worded. Score distributions surface these patterns so you can refine future assessments with data, not guesswork.
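
The two signals described here are simple to compute. The following is a sketch under stated assumptions: the `calibration_flags` function, the 0.95 cutoff taken from the example in the text, and the pass/fail data layout (1 for pass, 0 for fail) are all illustrative, not the platform's analytics.

```python
def calibration_flags(
    results: dict[str, dict[str, list[int]]], easy_cutoff: float = 0.95
) -> list[str]:
    """results maps section -> question id -> per-candidate outcomes (1 = pass, 0 = fail)."""
    flags = []
    for section, questions in results.items():
        all_scores = [s for scores in questions.values() for s in scores]
        if all_scores:
            avg = sum(all_scores) / len(all_scores)
            if avg >= easy_cutoff:
                flags.append(f"{section}: average {avg:.0%}, probably too easy")
        for qid, scores in questions.items():
            # A question nobody passes may be badly worded rather than hard.
            if scores and not any(scores):
                flags.append(f"{section}/{qid}: every candidate failed, check wording")
    return flags


results = {
    "SQL": {"q1": [1, 1, 1, 1], "q2": [1, 1, 1, 1]},
    "System Design": {"q1": [1, 0, 1, 0], "q2": [0, 0, 0, 0]},
}
for flag in calibration_flags(results):
    print(flag)
```

With this made-up data, the SQL section is flagged as probably too easy and the second system design question is flagged for a wording check.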

Third, the Smart Question Bank grows over time. Questions that perform well get tagged, categorized by difficulty from Beginner to Expert, and organized for easy reuse. You can clone a proven assessment for a similar role and adjust only what needs to change. The more you use the system, the stronger your question library becomes.

The Compound Effect on Your Hiring Pipeline

The obvious benefit is speed. Assessments that took a full day now take fifteen minutes. But the second-order effects are where the real value compounds.

When assessment creation is fast, you create more tailored assessments. Instead of using one generic "software engineer" test for three different roles, you build role-specific assessments that actually differentiate between a frontend specialist, a backend developer, and a DevOps engineer. Better assessments produce more accurate signal, which means fewer bad hires and fewer wasted interview hours.

When assessment creation is easy, more people on your team can contribute. A hiring manager can draft an assessment between two meetings and hand it off for polish. The bottleneck shifts from "who has time to build this" to "who should review this."

When assessment results include calibration data, your assessments get better over time. Each hiring cycle feeds insights back into the next one.

What This Means for Your Team

If your team is hiring five to fifty people per month, the math is straightforward. Assume each assessment takes six hours to build manually. At ten open roles, that is sixty hours of recruiter time per cycle. Cut that to fifteen minutes per assessment, and you reclaim nearly all of it for the work that actually moves the needle on hire quality: candidate relationships, employer branding, and strategic planning.
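
If you want to plug in your own numbers, the arithmetic fits in a few lines. The figures below are the ones used above (ten roles, six hours manually, fifteen minutes with AI assistance); the `hours_reclaimed` helper is a hypothetical name for this sketch.

```python
def hours_reclaimed(
    roles: int, manual_hours: float, assisted_hours: float
) -> tuple[float, float]:
    """Return (hours saved per cycle, share of the manual total saved)."""
    manual_total = roles * manual_hours
    assisted_total = roles * assisted_hours
    saved = manual_total - assisted_total
    return saved, saved / manual_total


saved, share = hours_reclaimed(roles=10, manual_hours=6.0, assisted_hours=0.25)
print(f"{saved} hours reclaimed ({share:.0%})")  # 57.5 hours reclaimed (96%)
```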

The shift from manual to AI-assisted assessment creation is not about replacing human judgment. It is about freeing human judgment from the mechanical parts of the process so it can focus on the decisions that matter most.

AI handles the first draft. You make the final call. And the assessment that used to take all day is live before your first coffee gets cold.

Ready to see how fast assessment creation can be? Try building your first AI-generated technical assessment on nirn.ai. Paste a job description, review the AI's suggestions, and publish a role-specific assessment in under fifteen minutes.