Thursday, March 5, 2026

AI Interview Cheating Is Surging: How to Design Assessments That Stay One Step Ahead

Remote hiring broadened talent pools and shortened hiring cycles. But it also opened the door to a problem growing faster than most hiring teams realize: AI-powered cheating during online assessments and interviews.

This is not the old problem of candidates Googling answers in a separate tab. A new generation of AI interview assistance tools operates at the operating system level, creating invisible overlays that feed candidates real-time, AI-generated answers to technical questions, coding challenges, and even behavioral prompts. These tools are commercially available, actively marketed, and increasingly sophisticated.

If you are responsible for hiring technical talent, this article walks you through how these tools work, why traditional proctoring falls short, and what a defensible assessment integrity strategy actually looks like in 2026.

The Scale of the Problem

By late 2025, industry analyses indicated that roughly 35% of candidates showed signs of cheating during online technical assessments. That number is likely conservative, because it only captures candidates whose behavior was flagged by existing detection methods. Candidates using the most advanced tools may not be triggering any alerts at all.

The AI cheating ecosystem has matured rapidly. What started as candidates running ChatGPT in a separate window has evolved into purpose-built desktop applications that listen to audio, transcribe questions in real time, generate contextually appropriate answers, and display them on screen in a way that is invisible to screen capture and screen sharing software.

For hiring managers and talent acquisition leaders, this represents a genuine threat to assessment integrity. When a significant percentage of candidates may be receiving AI assistance, the signal-to-noise ratio of your entire hiring pipeline degrades. You risk passing on honest, capable candidates in favor of those who are simply better at using cheating tools.

How AI Cheating Tools Actually Work

Understanding the mechanism is essential to understanding why this problem is difficult to solve with traditional approaches.

The Audio Path

The tool captures audio from the interview or assessment through system audio or microphone input. It transcribes questions in real time, sends them to a large language model, and displays the AI-generated response as a text overlay on the candidate's screen. The candidate reads the answer while appearing to look at the camera.

The Visual Path

For coding assessments, the tool captures the problem statement using optical character recognition, generates a solution through an AI model, and displays the code on an overlay. The candidate then types the solution as if working through it independently.

Why Browser-Based Detection Cannot Catch Them

Here is the critical insight: these overlay tools use operating system-level APIs specifically designed to exclude certain windows from screen capture. On Windows, for example, the SetWindowDisplayAffinity function accepts a WDA_EXCLUDEFROMCAPTURE flag that does exactly this. This is not an exploit. It is a legitimate OS feature. When a proctoring system captures the candidate's screen, the overlay simply does not appear. The screen recording looks completely normal.

Any proctoring approach that relies solely on observing what is on the candidate's screen is fundamentally limited against these tools.

Why Traditional Proctoring Is Not Enough

Most platforms offer proctoring as an optional add-on, typically limited to screen recording and basic browser lockdown. Against AI overlay tools, these traditional measures are insufficient:

- Screen recording does not capture invisible overlays

- Browser lockdown does not prevent OS-level applications from running

- Periodic camera snapshots miss subtle behavioral patterns

- Optional proctoring creates a two-tier system where only some candidates are monitored

The uncomfortable truth is that you cannot win the overlay detection arms race from within a browser. Every detection method at the browser level can be circumvented by a tool operating at the OS level.

A Multi-Layered Defense Strategy

If direct detection of overlay tools is fundamentally limited, what does a defensible strategy look like? The answer is layered defense: combining traditional proctoring with behavioral analysis and intelligent assessment design.

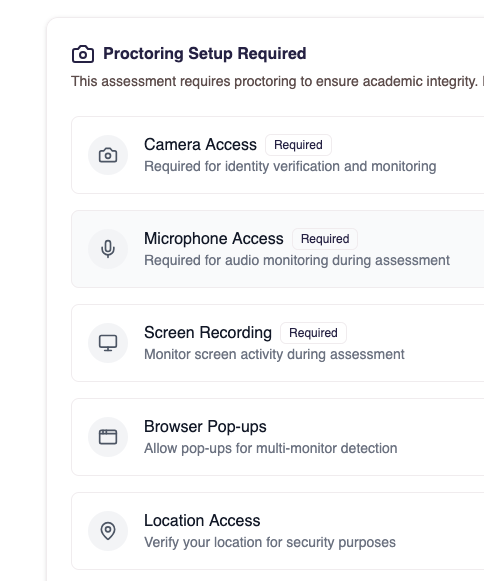

At nirn.ai, we treat proctoring as a core platform capability rather than an optional add-on. Every assessment includes multiple monitoring layers.

Always-On Monitoring

Proctoring is built into the assessment experience by default. This includes continuous browser monitoring, camera recording with snapshots analyzed through computer vision, screen recording, and fullscreen enforcement.

Computer Vision Analysis

Camera feeds are analyzed for behavioral indicators. Head pose tracking detects when candidates repeatedly look away in patterns consistent with reading from an external source. Object detection identifies phones, tablets, secondary screens, and earbuds in the candidate's environment. Multi-monitor detection uses multiple browser-based methods to identify additional displays.

Browser Event Tracking

The platform monitors tab switches, copy-paste actions, right-click events, and keyboard shortcuts. Configurable violation thresholds allow organizations to set their own tolerance, with auto-submission triggered when thresholds are exceeded.
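To make the threshold idea concrete, here is a minimal sketch of how configurable violation limits might drive auto-submission. It is an illustration only, not a description of any platform's implementation: the `ViolationTracker` class, the event names, and the limits are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class ViolationTracker:
    """Counts proctoring events and decides when to auto-submit.

    Event names and limits are hypothetical, chosen for illustration.
    """
    thresholds: dict[str, int]
    counts: Counter = field(default_factory=Counter)

    def record(self, event: str) -> bool:
        """Record one event; return True if the assessment should auto-submit."""
        self.counts[event] += 1
        limit = self.thresholds.get(event)
        # Events without a configured limit are counted but never trigger submission.
        return limit is not None and self.counts[event] > limit


# An organization tolerating up to 3 tab switches and 1 paste.
tracker = ViolationTracker(thresholds={"tab_switch": 3, "paste": 1})
events = ["tab_switch", "paste", "tab_switch", "right_click", "paste"]
decisions = [tracker.record(e) for e in events]
print(decisions)  # only the second paste exceeds its limit
```

Keeping the limits in configuration rather than in code is what lets each organization set its own tolerance without changing the monitoring logic.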

These monitoring layers are necessary but not sufficient on their own. The real strategic advantage comes from behavioral analysis.

Behavioral Analysis: The Sustainable Strategy

If you cannot reliably detect the tool, detect the behavior the tool produces. This is the key insight.

When a candidate uses AI assistance, they produce behavioral patterns that differ from a candidate working independently, regardless of how invisible the tool is.

Response Timing Patterns

AI introduces processing latency: the tool has to capture the question, generate an answer, and display it before the candidate can respond. As a result, an AI-assisted candidate tends to show suspiciously consistent timing across questions of varying difficulty. A genuine candidate shows variable response times, answering familiar topics quickly and taking longer on challenging ones.
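One way to quantify this is to look at two numbers per candidate: how much response times vary overall, and how strongly they track question difficulty. The sketch below assumes you have per-question response times and some difficulty rating for each question. The function name, the sample data, and the difficulty scale are hypothetical, and neither number is proof of cheating on its own.

```python
from statistics import mean, pstdev


def timing_signals(times_s, difficulty):
    """Return (coefficient of variation, Pearson correlation with difficulty)."""
    if len(times_s) != len(difficulty) or len(times_s) < 2:
        raise ValueError("need two or more paired observations")
    mt, md = mean(times_s), mean(difficulty)
    if mt <= 0:
        raise ValueError("response times must be positive")
    st, sd = pstdev(times_s), pstdev(difficulty)
    cv = st / mt  # low values mean nearly constant response times
    if st == 0 or sd == 0:
        return cv, 0.0  # correlation is undefined; report it as zero
    cov = mean((t - mt) * (d - md) for t, d in zip(times_s, difficulty))
    return cv, cov / (st * sd)


difficulty = [1, 2, 3, 4, 5]       # hypothetical per-question difficulty ratings
genuine = [20, 45, 60, 110, 150]   # seconds; slower on harder questions
flat = [48, 52, 50, 51, 49]        # seconds; nearly constant regardless of difficulty

for label, times in (("genuine", genuine), ("flat", flat)):
    cv, r = timing_signals(times, difficulty)
    print(f"{label}: cv={cv:.2f} corr={r:.2f}")
```

In this toy data the flat profile has both low variation and a weak link to difficulty, which is the combination worth a closer human look.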

Typing Dynamics

Candidates reading from an overlay produce distinctive burst typing patterns: read a chunk, type rapidly, pause, read the next chunk. Genuine problem-solving involves pauses for thinking, backtracking, and restructuring.
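As a rough illustration, burst typing can be summarized from keystroke timestamps alone. The sketch below groups keystrokes into bursts separated by long pauses and reports the typing rate inside bursts. The 2-second pause cutoff, the function names, and the synthetic data are assumptions for illustration. A real analysis would also need to account for deletions and edits, which this sketch ignores.

```python
def split_bursts(key_times, pause_s=2.0):
    """Group ascending keystroke timestamps (seconds) into bursts split by long pauses."""
    bursts = []
    for t in key_times:
        if bursts and t - bursts[-1][-1] < pause_s:
            bursts[-1].append(t)   # still inside the current burst
        else:
            bursts.append([t])     # a long pause (or the first key) starts a new burst
    return bursts


def burst_summary(key_times, pause_s=2.0):
    """Summarize burst count, mean burst size, and keys per second inside bursts."""
    bursts = split_bursts(key_times, pause_s)
    sizes = [len(b) for b in bursts]
    typing_time = sum(b[-1] - b[0] for b in bursts)   # pauses between bursts excluded
    intervals = sum(len(b) - 1 for b in bursts)
    return {
        "bursts": len(bursts),
        "mean_burst_size": sum(sizes) / len(sizes) if sizes else 0.0,
        "in_burst_rate": intervals / typing_time if typing_time > 0 else 0.0,
    }


# Synthetic "read, type, pause" pattern: three runs of 10 keys, 5 seconds apart.
times, start = [], 0.0
for _ in range(3):
    times.extend(start + 0.1 * i for i in range(10))
    start += 0.9 + 5.0

summary = burst_summary(times)
print(summary)
```

Uniformly sized bursts typed at a steady, fast rate with regular pauses in between are the pattern described above. Genuine problem-solving usually produces messier burst sizes and more irregular gaps.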

Eye Movement and Gaze Patterns

Reading from an overlay produces horizontal gaze sweeps. This differs from natural eye movement patterns of recall, where gaze tends to shift upward or become unfocused during thinking.
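A very simple proxy for "horizontal sweeps" is the share of total gaze travel that happens along the horizontal axis. The sketch below assumes an upstream gaze tracker already provides normalized (x, y) gaze points. That input format, the function name, and the sample traces are hypothetical, and a production system would need far more robust features than this single ratio.

```python
def horizontal_travel_ratio(gaze_points):
    """Fraction of total gaze travel that is horizontal, in the range 0 to 1."""
    dx = dy = 0.0
    for (x0, y0), (x1, y1) in zip(gaze_points, gaze_points[1:]):
        dx += abs(x1 - x0)
        dy += abs(y1 - y0)
    total = dx + dy
    return dx / total if total > 0 else 0.0


# Reading-like trace: left-to-right sweeps with a small drop to the next line.
reading = [(0.1, 0.50), (0.3, 0.50), (0.5, 0.50), (0.7, 0.50),
           (0.1, 0.52), (0.3, 0.52), (0.5, 0.52), (0.7, 0.52)]
# Thinking-like trace: gaze drifts upward with little horizontal movement.
thinking = [(0.50, 0.5), (0.50, 0.3), (0.52, 0.2), (0.50, 0.4)]

print(round(horizontal_travel_ratio(reading), 2))
print(round(horizontal_travel_ratio(thinking), 2))
```

A ratio close to 1 sustained over many seconds is consistent with reading. It should be treated as one signal among several, since reading the question itself produces the same pattern.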

Answer Depth and Consistency

AI-generated answers tend to be competent but surface-level. They lack the depth and personal experience that characterize genuine expertise. More importantly, AI-assisted answers often fail to maintain consistency across related questions, because each answer is generated largely in isolation.

The sustainable strategy for assessment integrity is not trying to detect every cheating tool. It is designing assessments and analysis systems that make the behavioral signatures of AI assistance visible, regardless of how the assistance is delivered.

Assessment Design Practices That Defeat AI Assistance

Technology is only part of the solution. How you design your assessments matters enormously.

Use Conversational Depth Probing

Never accept a single answer as sufficient. Follow up with questions like "Why did you choose that approach over the alternatives?" AI tools generate answers to the question asked, but struggle with iterative, contextual follow-ups that require genuine understanding.

Use Code Modification Challenges

Instead of asking candidates to solve a problem from scratch, present them with existing code and ask them to modify it. For example: "Refactor this to use recursion instead of iteration" or "Add error handling for these edge cases." Code modification challenges are difficult for AI assistance tools because they require contextual understanding of existing code, not just generating new solutions.

Build Contextual Cross-References

Reference earlier answers later in the assessment. For example: "You mentioned earlier that you prefer event-driven architecture. How would you apply that principle to this problem?" This tests whether the candidate holds the mental model implied by their previous answers.

Require Narration During Video Responses

For video-based questions, ask candidates to explain their thought process as they work through a problem. Genuine candidates naturally narrate their reasoning. Candidates relying on AI assistance produce a different pattern: their explanation does not match the sophistication of their written answers.

Include Canary Questions

Reference a fictional framework or methodology and ask about the candidate's experience with it. A genuine candidate will say they are not familiar with it. An AI tool will often generate a confident, plausible-sounding but fabricated answer.

Randomize and Create Variants

Use question pools with randomized ordering and multiple variants. Time-box individual questions rather than the entire assessment to prevent candidates from spending excessive time on AI-assisted answers.
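Here is a minimal sketch of how variant selection and ordering might be randomized per candidate while staying reproducible for later review. The pool structure, the field names such as `time_limit_s`, and the identifiers are hypothetical. Seeding from the candidate and assessment IDs is one possible design choice, not the only one.

```python
import hashlib
import random


def build_assessment(pool, candidate_id, assessment_id):
    """Pick one variant per question and shuffle the order, deterministically per candidate."""
    digest = hashlib.sha256(f"{assessment_id}:{candidate_id}".encode()).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))
    # Sort question IDs first so the result does not depend on dict insertion order.
    picked = [(qid, rng.choice(variants)) for qid, variants in sorted(pool.items())]
    rng.shuffle(picked)
    return picked


# Hypothetical pool: each question has several variants, each with its own time box.
pool = {
    "q1": [{"prompt": "Reverse a linked list", "time_limit_s": 600},
           {"prompt": "Detect a cycle in a linked list", "time_limit_s": 600}],
    "q2": [{"prompt": "Add retries to this HTTP client", "time_limit_s": 900},
           {"prompt": "Add timeouts to this HTTP client", "time_limit_s": 900}],
    "q3": [{"prompt": "Explain this SQL query plan", "time_limit_s": 300}],
}

for qid, variant in build_assessment(pool, "cand-1", "backend-2026"):
    print(qid, variant["prompt"], variant["time_limit_s"])
```

Because the seed is derived from the IDs, the same candidate always sees the same paper, which makes a flagged session reproducible, while different candidates are likely to see different variants and orderings.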

The Honest Reality of the Arms Race

No assessment platform can guarantee it catches every instance of cheating. Anyone who claims otherwise is not being honest. The AI cheating tool ecosystem is evolving rapidly, and detection must evolve with it.

What a responsible platform can do is make cheating significantly harder, make the behavioral signatures more visible, and give hiring teams the data they need to make informed decisions. The combination of always-on monitoring, behavioral analysis, and intelligent assessment design creates a multi-layered defense that addresses the problem from fundamentally different angles.

At nirn.ai, our approach is to continuously evolve these detection layers. As AI assistance tools become more sophisticated, behavioral analysis becomes more important, not less.

What Hiring Leaders Should Do Now

- Audit your current proctoring setup. Is it optional or always-on? Does it go beyond screen recording?

- Redesign assessments for depth. Move toward conversational, iterative assessments that test understanding rather than recall.

- Train interviewers on follow-up techniques. The most effective cheating detection often happens through skilled interviewing, not technology alone.

- Evaluate behavioral analysis capabilities. Ask specifically about timing analysis, typing dynamics, and gaze monitoring.

- Accept that this is ongoing. Assessment integrity requires continuous investment and adaptation.

The rise of AI cheating tools does not mean online assessments are broken. It means the bar for what constitutes a credible assessment platform has risen significantly. Organizations that take a multi-layered, behaviorally informed approach will continue to make confident hiring decisions. Those that rely on legacy proctoring methods will find their signal increasingly degraded.

The future of hiring integrity belongs to platforms and processes designed for this new reality.